Engineering


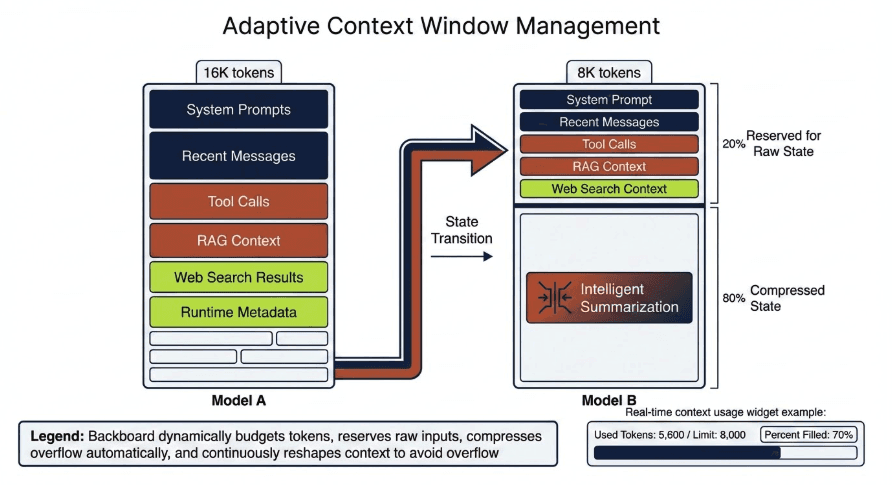

New: Adaptive Context Window Management Across 17,000+ Models

Backboard's Adaptive Context Management automatically handles conversation state when switching between 17,000+ models with different context window sizes—included for free.

Backboard now includes Adaptive Context Management, a system that automatically manages conversation state when your application moves between models with different context window sizes.

With access to 17,000+ LLMs on the platform, model switching is common. But context limits vary widely across models. What fits in one model may overflow another.

Until now, developers had to handle that manually. Adaptive Context Management removes that burden. And it's included for free with Backboard.

The Problem: Context Windows Are Inconsistent

Different models support different context window sizes. If an application starts a session on a large-context model and later routes a request to a smaller one, the total state can exceed what the new model can handle.

That state typically includes:

system prompts

recent conversation turns

tool calls and tool responses

RAG context

web search results

runtime metadata

Most platforms leave this responsibility to developers. In multi-model systems, that quickly becomes fragile.
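As a rough illustration of the overflow, the sketch below sums hypothetical token counts for each kind of state and compares the total against two context limits. The token counts, the 128,000-token limit, and the `fits` helper are assumptions made up for this example; they are not Backboard measurements or API names.

```python
# Hypothetical token counts per state component (illustrative numbers only).
session_state = {
    "system_prompt": 600,
    "recent_turns": 9_000,
    "tool_calls": 2_500,
    "rag_context": 4_000,
    "web_search": 1_500,
    "runtime_metadata": 200,
}


def fits(state_tokens: dict[str, int], context_limit: int) -> bool:
    """Return True when the combined state fits in the model's context window."""
    return sum(state_tokens.values()) <= context_limit


total = sum(session_state.values())  # 17,800 tokens in this example
print(total, fits(session_state, 128_000), fits(session_state, 8_191))
```

The same session that fits comfortably in the larger window overflows the smaller one, which is the situation a developer would otherwise have to detect and repair by hand.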

Introducing Adaptive Context Management

Backboard now automatically handles context transitions when models change. The system works as follows:

20% of the model's context window is reserved for raw state

The remaining 80% is kept free by summarizing whatever does not fit in that raw-state budget

Within the 20% budget, Backboard prioritizes:

system prompt

recent messages

tool calls

RAG results

web search context

Whatever fits inside this budget is passed directly to the model. Everything else is compressed.
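The budgeting rule above can be sketched roughly as follows. This is a minimal illustration, not Backboard's implementation: the `StateItem` type, the `split_state` function, the greedy packing strategy, and the assumption that token counts are precomputed are all hypothetical. Only the 20% figure and the priority order come from the description above.

```python
from dataclasses import dataclass

# Priority order taken from the list above; a lower number is kept first.
PRIORITY = {
    "system_prompt": 0,
    "recent_message": 1,
    "tool_call": 2,
    "rag_result": 3,
    "web_search": 4,
}

RAW_STATE_FRACTION = 0.20  # share of the context window reserved for raw state


@dataclass
class StateItem:
    kind: str    # one of the PRIORITY keys
    tokens: int  # token count, assumed to be computed elsewhere
    text: str


def split_state(items: list[StateItem], context_limit: int):
    """Return (kept_raw, to_summarize) for a model with `context_limit` tokens."""
    budget = int(context_limit * RAW_STATE_FRACTION)
    kept, overflow, used = [], [], 0
    # sorted() is stable, so items of the same kind keep their original order.
    for item in sorted(items, key=lambda i: PRIORITY[i.kind]):
        if used + item.tokens <= budget:
            kept.append(item)
            used += item.tokens
        else:
            # Assumption: an item that does not fit is sent to summarization,
            # and smaller lower-priority items may still be kept afterwards.
            overflow.append(item)
    return kept, overflow
```

For example, with a 1,000-token window the raw budget is 200 tokens, so a 50-token system prompt, a 100-token message, and a 40-token RAG result would be kept, while an 80-token tool call that no longer fits would go to summarization.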

Intelligent Summarization

When compression is required, Backboard summarizes the remaining conversation automatically:

First, we attempt summarization using the model the user is switching to.

If that summary still does not fit, we fall back to the larger model that was previously in use to generate a more efficient summary.
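The two-step fallback could look something like the sketch below. The `summarize` and `count_tokens` callables, the `summary_budget` parameter, and the function name are placeholders introduced for illustration; the post does not describe how Backboard sizes the summary or calls the models.

```python
from typing import Callable


def summarize_with_fallback(
    overflow_text: str,
    target_model: str,
    previous_model: str,
    summary_budget: int,
    summarize: Callable[[str, str, int], str],
    count_tokens: Callable[[str], int],
) -> tuple[str, str]:
    """Return (summary, model_used), trying the target model first."""
    # Step 1: ask the model being switched to for a summary.
    summary = summarize(target_model, overflow_text, summary_budget)
    if count_tokens(summary) <= summary_budget:
        return summary, target_model
    # Step 2: the summary is still too large, so fall back to the larger
    # model that was previously in use.
    summary = summarize(previous_model, overflow_text, summary_budget)
    return summary, previous_model
```

Passing the model calls in as functions keeps the sketch independent of any particular SDK and makes the fallback path easy to exercise with stubs.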

You Should Rarely Hit 100% Context Again

Because Adaptive Context Management runs continuously during requests and tool calls, the system proactively reshapes the state before a context window is exhausted.

Developers Can See Exactly What Is Happening

We expose context usage directly in the msg endpoint:

"context_usage": { "used_tokens": 1302, "context_limit": 8191, "percent": 19.9, "summary_tokens": 0, "model": "gpt-4" }

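A client could read this field to log or alert on context pressure. The sketch below parses the sample payload shown above; the `describe_usage` helper, the 80% warning threshold, the reading of `summary_tokens > 0` as "summarization happened", and the assumption that `context_usage` sits at the top level of the response body are all illustrative rather than documented behavior.

```python
import json

# Sample payload copied from the post; its position in the full response is assumed.
response_body = (
    '{"context_usage": {"used_tokens": 1302, "context_limit": 8191, '
    '"percent": 19.9, "summary_tokens": 0, "model": "gpt-4"}}'
)


def describe_usage(body: str, warn_at_percent: float = 80.0) -> str:
    """Build a one-line status string from the context_usage field."""
    usage = json.loads(body)["context_usage"]
    status = "WARN" if usage["percent"] >= warn_at_percent else "ok"
    summarized = usage["summary_tokens"] > 0  # assumed meaning of the field
    return (
        f'{status}: {usage["model"]} '
        f'{usage["used_tokens"]}/{usage["context_limit"]} tokens '
        f'({usage["percent"]}%), summarized={summarized}'
    )


print(describe_usage(response_body))
```
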
The Bigger Idea

Backboard was designed so developers can treat models as interchangeable infrastructure. Adaptive Context Management is another step toward that goal. Applications can move freely across thousands of models while Backboard ensures the conversation state always fits.

Adaptive Context Management is available today through the Backboard API. Start building at docs.backboard.io.

Rob Imbeault
